Understanding your baseline first

Before running any landing page optimization tests, establish a reliable baseline. Most accounts do not have a stable enough conversion rate to run meaningful tests because they are mixing traffic sources, seasonal periods, and audience temperatures without accounting for those variables.

A reliable baseline requires: a consistent traffic source (do not mix paid and organic unless testing that specifically), a consistent audience temperature (do not mix retargeting and cold traffic), and enough volume (at minimum 1,000 sessions and 100 conversions per variant, a practical floor for reaching statistical significance). Without this foundation, your test results will be misleading.
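To make the volume requirement concrete, here is a minimal sketch of a sample size estimate. It assumes a standard two-sided two-proportion test at 95% confidence and 80% power (the power value and the function name `sessions_per_variant` are assumptions for illustration, not something prescribed above), and it never returns less than the 1,000-session and 100-conversion floors.

```python
import math
from statistics import NormalDist

MIN_SESSIONS = 1_000     # per-variant floor from the text above
MIN_CONVERSIONS = 100    # per-variant floor from the text above


def sessions_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Rough sessions needed per variant to detect a given relative lift.

    baseline_rate: current conversion rate, e.g. 0.05 for 5%
    relative_lift: smallest lift worth detecting, e.g. 0.20 for +20%
    alpha, power:  assumed test settings (95% confidence, 80% power)
    """
    if not 0 < baseline_rate < 1:
        raise ValueError("baseline_rate must be between 0 and 1")
    if relative_lift <= 0:
        raise ValueError("relative_lift must be positive")

    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    if p2 >= 1:
        raise ValueError("lifted rate must stay below 1")

    # Standard normal-approximation formula for comparing two proportions.
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n_formula = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2

    # Sessions needed for the control to reach the conversion floor.
    n_for_conversions = MIN_CONVERSIONS / p1

    return math.ceil(max(n_formula, MIN_SESSIONS, n_for_conversions))


if __name__ == "__main__":
    # Hypothetical account: 5% baseline, looking for a 20% relative lift.
    print(sessions_per_variant(0.05, 0.20))
```

The baseline rate is an input here, which is the practical reason to stabilize it first: if the baseline swings with traffic mix or season, the estimate swings with it.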

What actually moves conversion rates

The factors that produce the largest conversion lifts on landing pages, in rough order of impact:

- Message match between ad and landing page. When the headline on the landing page directly echoes the ad the visitor clicked, conversion rates go up. This is the highest-leverage single change in most accounts we audit.

- Page load speed. Every additional second of load time drops conversion rate by 4 to 8%. On mobile, the impact is higher. Fix speed before testing copy.

- CTA clarity and position. Vague CTAs ('Learn more', 'Submit') underperform specific ones ('Get my free audit', 'See pricing'). The CTA needs to describe what happens next, not what the user should do.

- Social proof quality and relevance. Generic testimonials underperform testimonials from people who look like the visitor. Industry-specific proof and named testimonials from recognizable companies outperform generic star ratings.

- Form length. Each additional field reduces completion rate. Remove every field that does not directly affect your ability to qualify or follow up with the lead.

How to structure a testing program

A landing page testing program needs structure to be useful:

- Generate hypotheses from data, not opinions. Use heatmaps (Hotjar, Microsoft Clarity), session recordings, and user surveys to identify where users are dropping off and why. Test solutions to observed problems, not random ideas.

- Prioritize by expected impact and ease of implementation. Use the PIE framework (Potential, Importance, Ease) to score test ideas and work through the highest-scoring ones first.

- Test one thing at a time. Multivariate testing requires 5 to 10x the traffic of A/B testing. For most accounts, clean A/B tests produce better insights faster.

- Define success criteria before running the test. What conversion rate improvement would be meaningful? What level of statistical confidence do you require? What is the minimum test duration? Define these before you start, not after the results come in.
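As a rough illustration of the PIE scoring step, the sketch below ranks test ideas by the average of their three scores. The 1 to 10 scale, the simple average, the `Idea` class, and the example ideas with their scores are all assumptions for illustration; teams weight the three factors differently.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    """One test idea scored on the three PIE factors (assumed 1-10 scale)."""
    name: str
    potential: int   # how much improvement is possible on this page
    importance: int  # how valuable the traffic to this page is
    ease: int        # how easy the test is to build and run

    def __post_init__(self):
        for factor in (self.potential, self.importance, self.ease):
            if not 1 <= factor <= 10:
                raise ValueError("PIE scores must be between 1 and 10")

    @property
    def pie_score(self) -> float:
        # Assumed convention: unweighted average of the three factors.
        return (self.potential + self.importance + self.ease) / 3


def prioritize(ideas):
    """Return ideas sorted from highest to lowest PIE score."""
    return sorted(ideas, key=lambda idea: idea.pie_score, reverse=True)


if __name__ == "__main__":
    # Hypothetical backlog; the scores are made up for the example.
    backlog = [
        Idea("Rewrite hero headline to match ad copy", 8, 9, 7),
        Idea("Cut form from 7 fields to 4", 7, 8, 9),
        Idea("Reorder proof section above CTA", 5, 6, 4),
    ]
    for idea in prioritize(backlog):
        print(f"{idea.pie_score:.1f}  {idea.name}")
```

Scoring is still a judgment call, so the value is less in the exact numbers and more in forcing every idea through the same three questions before it gets traffic.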

Highest-impact elements to test

The elements most likely to produce a meaningful conversion lift for most accounts:

- Hero headline. The first thing visitors read determines whether they keep reading. Test benefit-focused headlines against feature-focused ones, question formats, and specific claim headlines.

- Primary CTA button. Copy, color, size, and position all affect CTR on the CTA. Test the copy first, because it has the most variance in results.

- Social proof format. Test star ratings vs. named testimonials vs. case study excerpts vs. client logos. The format that works best depends on your product category and audience sophistication.

- Form fields. Remove every field that is not essential and measure the impact on both form completion rate and lead quality. Shorter forms usually complete at higher rates; the question is whether lead quality holds.

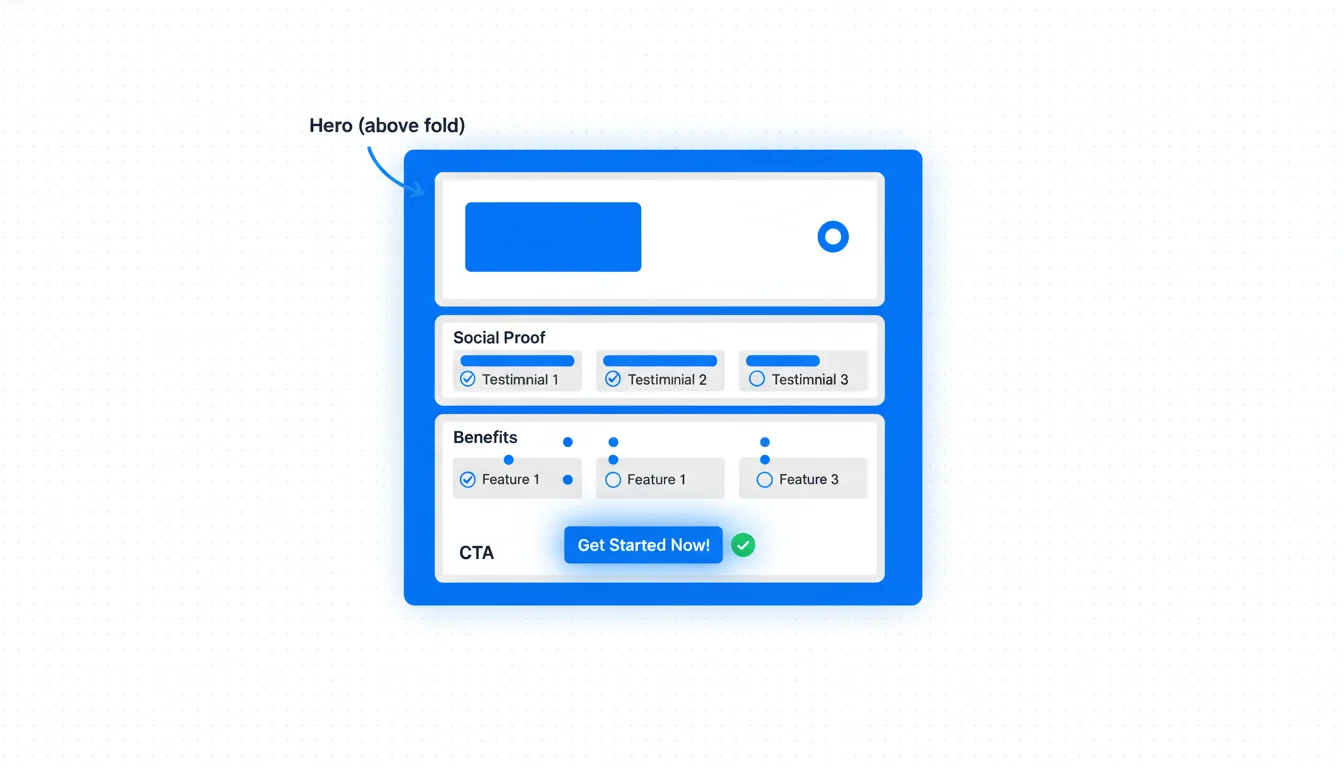

- Page structure and flow. Above-the-fold layout, section ordering, and whether the CTA appears before or after the proof section all affect conversion. Test structural changes with lower-fidelity wireframes before full design implementation.

Testing mistakes that waste time

The testing mistakes that consume resources without producing useful learning:

- Stopping tests too early. Seeing positive results at day 5 and calling the test is how you get false positives. Run tests to statistical significance (95% confidence minimum) with enough sample size (100+ conversions per variant).

- Testing too many variables at once. Changing headline, CTA, images, and form fields simultaneously means you cannot know what caused the result. One primary variable per test.

- Testing on insufficient traffic. A test running on 50 conversions per variant over 3 weeks produces unreliable results. Accept that low-traffic pages cannot be tested quickly: either drive more traffic or accept a longer test duration.

- Ignoring seasonality and traffic quality. Comparing a variant that ran during a seasonal peak against a control that ran during a normal period is not a valid test. Make sure your test and control variants run simultaneously and traffic quality is consistent across both.
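The significance and sample size rules above can be sketched as a single check. This is a minimal example that assumes a pooled two-proportion z-test, one common way to compare two conversion rates; your testing platform may use a different method, and the function name `evaluate_test` and its return format are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def evaluate_test(conv_a, n_a, conv_b, n_b, confidence=0.95, min_conversions=100):
    """Compare control (a) and variant (b) with a two-sided z-test.

    conv_*: conversions per variant, n_*: sessions per variant.
    Refuses to call a result until both variants reach min_conversions.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("each variant needs at least one session")

    # Guard against calling the test early on thin data.
    if min(conv_a, conv_b) < min_conversions:
        return {"status": "keep running", "p_value": None}

    rate_a = conv_a / n_a
    rate_b = conv_b / n_b

    # Pooled rate under the assumption that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return {"status": "no difference detectable", "p_value": None}

    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    significant = p_value < (1 - confidence)
    status = "significant" if significant else "not significant"
    return {"status": status, "p_value": p_value, "lift": rate_b / rate_a - 1}


if __name__ == "__main__":
    # Hypothetical counts: 5.0% control vs 7.5% variant on 2,000 sessions each.
    print(evaluate_test(100, 2000, 150, 2000))
```

Note that this check assumes the sample size and duration were fixed in advance. Running it every day and stopping the first time it says "significant" brings the false-positive problem straight back.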

Tools and infrastructure for CRO testing

The tools needed for a functional landing page testing program:

- A/B testing platform: Google Optimize was sunset in 2023. Current options include VWO, Optimizely, Convert, and AB Tasty for enterprise; Unbounce or Instapage if you are building landing pages specifically for paid traffic.

- Heatmaps and session recording: Hotjar and Microsoft Clarity (free) are the two main options. Use both, because they reveal different things about user behavior.

- Analytics: GA4 as the source of truth for conversion data. Make sure your test variants are tracked as custom dimensions so you can segment by variant in GA4 as well as your A/B testing tool.

- Qualitative research: User surveys and customer interviews are underused in CRO. Talking to 10 customers about why they converted (or almost did not) surfaces insights that no amount of quantitative data will find.