Shopify attribution in 2026: what still works

The Shopify attribution model question in 2026 is not which tool you pick. It is which lie you are willing to live with. Last-click died for good this year, and every replacement carries its own distortion. Platform-reported ROAS in Meta and Google overstates contribution by 40 to 70% because both platforms claim the same conversion. Data-driven attribution inside GA4 is better than last-click but still blind to offline touches and organic lift. Triple Whale and Northbeam smooth the reporting but invent a blended number that feels real and is not. Spreadsheets are honest and slow. Incrementality tests are the only thing that tells you what actually moved revenue, and most stores have never run one. The right setup for a Shopify brand under $500k a month in revenue is a DIY blended sheet plus a quarterly geo holdout test. Above that, one attribution platform plus the same test. Nobody needs both Triple Whale and Northbeam.

- Last-click attribution is dead for ad spend decisions in 2026.

- Platform-reported, blended, and margin-adjusted ROAS each tell a different story.

- Triple Whale, Northbeam, and a DIY sheet all have tradeoffs worth naming out loud.

- Incrementality testing is the only method that survives Meta and Google both taking credit.

Why last-click attribution finally died in 2026

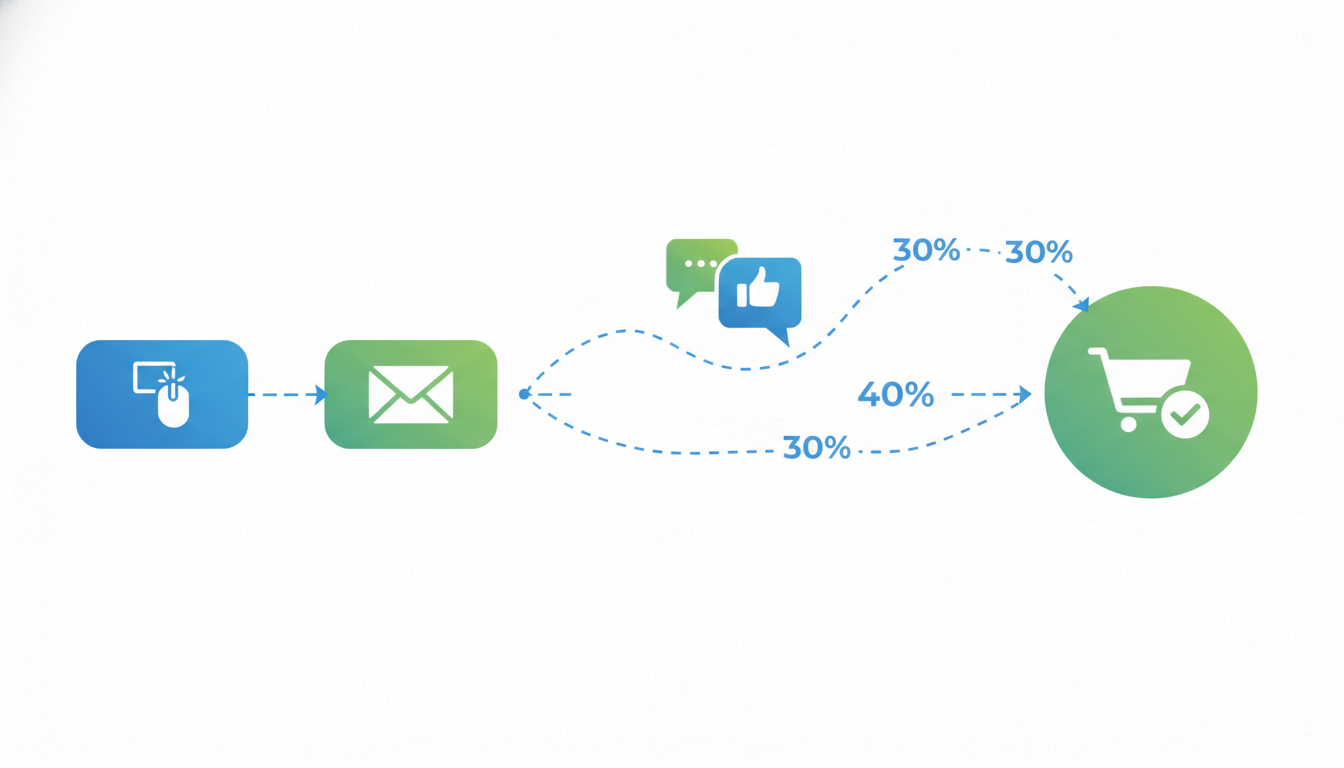

Last-click attribution was already a bad model in 2020. By 2026 it is actively dangerous. The last-click report inside GA4 assigns 100% of revenue credit to whatever source sent the last non-direct click before purchase, which sounds reasonable until you look at what that source usually is: branded search and email, with direct picking up every purchase that has no other click on record. In other words, the channels that captured demand other channels created. Meta, YouTube, and organic social do the heavy lifting at the top of the funnel, then last-click hands the trophy to whoever happened to be holding the receipt.
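To make the rule concrete, here is a minimal Python sketch of last non-direct click credit for a single purchase path. The channel names and the `last_non_direct_click` helper are hypothetical, and GA4's real model also applies lookback windows and its own channel grouping, which this sketch ignores.

```python
from typing import List, Optional


def last_non_direct_click(path: List[str]) -> Optional[str]:
    """Return the one channel that gets 100% of the credit for a purchase.

    `path` is the ordered list of channels the buyer touched, oldest first.
    Direct only gets credit when nothing else is on record.
    """
    if not path:
        return None
    # Walk backwards from the purchase and skip direct visits.
    for channel in reversed(path):
        if channel != "direct":
            return channel
    return "direct"


# Meta and YouTube created the demand; branded search collects the trophy.
journey = ["meta", "youtube", "branded_search", "direct"]
print(last_non_direct_click(journey))  # branded_search
```

Meta and YouTube get zero credit even though they started the journey, and if tracking drops every earlier click, the same purchase lands in direct.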

What changed in 2026 is the data quality collapse. iOS 18.4 landed in February and pushed another 11% of Safari sessions into full fingerprint blocking. Chrome finally shipped third-party cookie deprecation for 100% of users in March after four years of delays. Firefox tightened bounce tracking the same month. What that means for Shopify stores is that your last-click report now attributes 30 to 40% of purchases to "direct" traffic that was actually paid social, and you have no way to unmix it without server-side tracking plus a model that guesses. If you are still making budget decisions off a last-click GA4 dashboard, you are basically flipping coins. Worse than guessing, because the numbers look precise and are wrong in a consistent direction.

The short version of what to do with last-click in 2026: stop using it for spend decisions. Keep it as a sanity check for branded search and email, because it still measures those channels cleanly. For paid media, move to a data-driven model or a blended view, and validate quarterly with an incrementality test. Every attribution conversation from here is about which flavor of less-wrong you accept.

The three attribution models that actually work now

Three models survived the 2026 data collapse. None of them are perfect. All three are useful if you know what they measure and what they miss.

- Data-driven attribution (DDA) in GA4 and Google Ads. Google's data-driven attribution documentation explains the model itself. It uses a Shapley-value-style algorithm to distribute credit across the touchpoints in a converted user's path. Better than last-click by a mile, because it actually weights every touch. Still blind to anything outside Google's graph, which means Meta, TikTok, and organic social barely exist to it. Useful for Google Ads budget decisions inside the Google ecosystem. Useless as a blended source of truth.

- Platform-reported attribution, which is Meta and Google each running their own probabilistic model off their own pixel data. Both platforms claim the same purchase, so reported ROAS inflates by 40 to 70% on average. You cannot take the two numbers and add them. The platforms know this and do not fix it because every reported conversion justifies more spend. Useful inside each platform for campaign-level optimization. Dangerous if you trust the sum.

- Blended attribution, where you take total revenue, subtract organic and email, and divide the rest by total paid spend to get one blended ROAS. Honest, slow, and insensitive to which channel did what. Good for the board deck and the CEO. Bad for deciding whether to move $10k from Meta to Google next week.
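The Shapley idea behind DDA is easier to see on a toy example. This Python sketch computes exact Shapley credit when you already know the expected conversions for every combination of channels. It is the textbook Shapley value, not Google's implementation, which is not published in full; in practice the combination values have to be estimated from path data, and the numbers and channel names here are made up.

```python
from itertools import combinations
from math import factorial
from typing import Dict, FrozenSet, List


def shapley_credit(channels: List[str], value: Dict[FrozenSet[str], float]) -> Dict[str, float]:
    """Exact Shapley value per channel.

    value[S] = conversions expected when only the channels in S touch the buyer.
    Every subset of `channels`, including the empty set, must be a key.
    """
    n = len(channels)
    credit: Dict[str, float] = {}
    for ch in channels:
        others = [c for c in channels if c != ch]
        total = 0.0
        for k in range(len(others) + 1):
            # Standard Shapley weight for joining a coalition of size k.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = frozenset(subset)
                # Marginal contribution of `ch` when added to coalition s.
                total += weight * (value[s | {ch}] - value[s])
        credit[ch] = total
    return credit


# Hypothetical: 100 conversions when both touch, 60 with search alone, 20 with YouTube alone.
values = {
    frozenset(): 0.0,
    frozenset({"paid_search"}): 60.0,
    frozenset({"youtube"}): 20.0,
    frozenset({"paid_search", "youtube"}): 100.0,
}
print(shapley_credit(["paid_search", "youtube"], values))
# {'paid_search': 70.0, 'youtube': 30.0}
```

If paid search were the last click, last-click would hand it all 100 conversions; the Shapley split gives YouTube 30 for the demand it helped create, and the credits always sum to the full 100.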

Most operators pick one and stop thinking. The useful move is to run all three in parallel, compare them weekly, and trust the one closest to the truth for the decision at hand. Budget pacing? Platform-reported. Total marketing efficiency? Blended. Which Google campaign to scale? DDA. A different model for each decision. Nobody tells you this part because it is more work to explain than "use Triple Whale."

Platform-reported vs blended vs margin-adjusted

Every ROAS number you see on a Shopify store belongs to one of three frames. Mixing them up is the single most common reason a brand thinks it is profitable and is not.

- Platform-reported ROAS is what Meta and Google tell you, based on their own pixel and attribution windows. Inflated by double-counting. Useful for intra-platform decisions.

- Blended ROAS is total paid-driven revenue divided by total paid spend. Removes the double-count. Masks channel-level performance.

- Margin-adjusted ROAS is blended ROAS recalculated on gross margin instead of revenue. For a store with 60% gross margin, a 3.0 blended ROAS is actually 1.8 margin ROAS. This is the number that tells you if you are making money.
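A small Python sketch that computes all three frames from one week of numbers makes the gap between them visible side by side. The `Week` fields and the dollar amounts are hypothetical; paid-driven revenue follows the blended definition used earlier (total revenue minus organic and email).

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Week:
    meta_reported_revenue: float    # Meta's own dashboard
    google_reported_revenue: float  # Google Ads' own dashboard
    paid_driven_revenue: float      # total revenue minus organic and email
    paid_spend: float               # Meta + Google spend
    gross_margin: float             # 0.60 means 60%


def roas_frames(w: Week) -> Dict[str, float]:
    # Summing the platforms double-counts purchases both of them claim.
    platform_sum = (w.meta_reported_revenue + w.google_reported_revenue) / w.paid_spend
    blended = w.paid_driven_revenue / w.paid_spend
    margin = blended * w.gross_margin
    return {"platform_sum": platform_sum, "blended": blended, "margin": margin}


week = Week(48_000, 30_000, 60_000, 20_000, 0.60)
print({k: round(v, 2) for k, v in roas_frames(week).items()})
# {'platform_sum': 3.9, 'blended': 3.0, 'margin': 1.8}
```

Together the platforms claim $78k against $60k of paid-driven revenue, and only the margin frame says anything about profit.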

Most stores never calculate the third one. They run "profitable" campaigns at 2.5 blended ROAS on a product that has 45% margin, and are genuinely surprised when the P&L comes in negative at the end of the quarter. Blended ROAS measures revenue per dollar of ad spend, not profit. Revenue has to clear cost of goods, shipping, returns, discounts, and overhead before any of it lands on the P&L. Margin ROAS adjusts for at least the first one.

A practical target set for a Shopify DTC brand with 55 to 65% gross margin: platform-reported Meta ROAS above 2.5, blended ROAS above 2.0, margin ROAS above 1.3. Below a margin ROAS of 1.0, you are paying to ship product to customers at a loss, regardless of what Meta's dashboard says. We see this pattern in about a third of audits, usually because the founder was trusting a 4.2 reported Meta ROAS and never calculated the other two frames.
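Because margin ROAS is blended ROAS times gross margin, every margin target implies a minimum blended ROAS. A quick Python sketch of that arithmetic, using a hypothetical helper name:

```python
def min_blended_roas(margin_roas_target: float, gross_margin: float) -> float:
    """Blended ROAS needed so that blended * gross_margin hits the target."""
    if not 0 < gross_margin <= 1:
        raise ValueError("gross_margin must be a fraction in (0, 1]")
    return margin_roas_target / gross_margin


for gm in (0.55, 0.60, 0.65):
    print(f"{gm:.0%} margin: blended ROAS floor {min_blended_roas(1.3, gm):.2f}")
# 55% margin: blended ROAS floor 2.36
# 60% margin: blended ROAS floor 2.17
# 65% margin: blended ROAS floor 2.00
```

In the 55 to 65% band, the 2.0 blended floor only lines up with 1.3 margin ROAS at the top of the range. At 60% margin, a 2.0 blended ROAS is 1.2 margin ROAS, so the margin target is the one that binds.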

Attribution software: Triple Whale vs Northbeam vs native

The Shopify attribution software market in 2026 has narrowed to three real options: Triple Whale, Northbeam, and rolling your own out of GA4 plus a spreadsheet. Each makes different tradeoffs, and the wrong fit for your store size wastes $500 to $2,000 a month for nothing.

Triple Whale. Starts around $129 a month for stores under $1M in annual revenue, scales to $1,000+ at enterprise. UI is the best in the category. Pulls Meta, Google, TikTok, Klaviyo, and Shopify into one dashboard, runs its own pixel for first-party tracking, and gives you a blended ROAS number by default. The catch: the blended number is a model, not a measurement. It weights touches using Triple Whale's own proprietary heuristic, so you are trusting their math without being able to audit it. Works well for founders who want one number to look at every morning. Less useful for analysts who want to understand how the number was built.

Northbeam. Starts around $1,000 a month, aimed at stores doing $500k+ in monthly revenue. More sophisticated model than Triple Whale, uses marketing mix modeling layered on click attribution, does a better job of giving credit to brand-level channels like YouTube and podcasts. Also proprietary, also expensive. The right pick for a brand spending $200k+ a month in paid media and running creative across five or more platforms. Overkill for a store doing $80k a month on Meta and Google only.

Native (Shopify analytics + GA4 + spreadsheet). Free in tool cost, expensive in time. Shopify analytics has gotten better: it now shows marketing-attributed orders per channel using Shopify's own first-party pixel, which dodges most of the iOS and cookie problems. Pair that with GA4 DDA for in-ecosystem Google data and a simple Google Sheet that blends both against ad spend pulled from the platform UIs, and you have a good-enough view for a store under $500k a month. Takes 30 minutes a week to update. Tedious. Also the most honest number in the stack, because you built it yourself and you know exactly what assumption sits under each cell.

The choice tree we use with clients: under $500k a month, DIY plus Shopify analytics. Between $500k and $2M, Triple Whale is a reasonable upgrade. Above $2M, Northbeam starts earning its keep. Nobody needs both. If somebody sold you on "we use both for redundancy," you are paying double for the same wrong answer.
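The choice tree is simple enough to write down as a function. This Python sketch encodes the thresholds above by monthly revenue. How the exact $500k and $2M boundaries are handled is an assumption, since the text gives ranges rather than edge cases, and the function name is hypothetical.

```python
def attribution_stack(monthly_revenue: float) -> str:
    """Suggested stack by monthly revenue, following the choice tree above.

    Boundary handling is an assumption: exactly $500k goes to Triple Whale,
    and exactly $2M stays with Triple Whale.
    """
    if monthly_revenue < 500_000:
        tool = "DIY sheet + Shopify analytics"
    elif monthly_revenue <= 2_000_000:
        tool = "Triple Whale"
    else:
        tool = "Northbeam"
    # Every tier keeps the quarterly geo holdout test, and never two platforms.
    return f"{tool} + quarterly geo holdout test"


print(attribution_stack(80_000))  # DIY sheet + Shopify analytics + quarterly geo holdout test
```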

The DIY Shopify spreadsheet approach for stores under $500k/mo

The spreadsheet that works for Shopify ecommerce attribution at this size has five columns, one tab per week, and takes half an hour to update every Monday morning. Nothing fancy. The point is not sophistication, it is honesty.

Columns:

- Channel (Meta, Google Search, Google Shopping, TikTok, email, organic social, organic search, direct)

- Platform-reported revenue (pulled from each platform's UI for the week)

- Shopify-attributed revenue (sales from the orders Shopify's marketing report attributes to that channel, filtered to that week)

- Ad spend (pulled from each platform)

- Reconciled revenue (the lower of platform-reported or Shopify-attributed, rounded down)
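One row of that sheet, sketched in Python so the reconciliation rule is explicit. The `ChannelRow` name is hypothetical, rounding down to whole dollars is one reading of "rounded down", and letting rows with no platform number (organic search, direct) fall back to Shopify's figure is also an assumption.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChannelRow:
    channel: str
    platform_revenue: Optional[float]  # from the platform UI; None if there is no platform
    shopify_revenue: float             # from Shopify's marketing report
    ad_spend: float

    @property
    def reconciled_revenue(self) -> float:
        # Take the lower of the two sources, rounded down to the dollar.
        if self.platform_revenue is None:
            return float(math.floor(self.shopify_revenue))
        return float(math.floor(min(self.platform_revenue, self.shopify_revenue)))

    @property
    def over_claim(self) -> float:
        # How much the platform claims beyond the reconciled number.
        if self.platform_revenue is None:
            return 0.0
        return self.platform_revenue - self.reconciled_revenue

    @property
    def conservative_roas(self) -> Optional[float]:
        # Unpaid channels have no spend, so ROAS is undefined for them.
        if self.ad_spend <= 0:
            return None
        return self.reconciled_revenue / self.ad_spend


meta = ChannelRow("Meta", 42_000.0, 27_350.60, 12_000.0)
print(meta.reconciled_revenue, meta.over_claim, round(meta.conservative_roas, 2))
# 27350.0 14650.0 2.28
```

Watching `over_claim` week over week is a cheap way to spot tracking drift before it shows up in spend decisions.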

Reconciled revenue divided by ad spend gives you a conservative ROAS per channel. By construction it is never higher than what the platform reports, and on Meta it is almost always lower. That is the point. The gap between platform-reported and reconciled is the over-claim, and watching that gap week over week tells you more about your tracking health than any dashboard.

Add two derived cells at the bottom: blended ROAS (total reconciled revenue divided by total ad spend) and margin ROAS (blended ROAS times your gross margin percentage). Apply the same margin multiplier to each channel's conservative ROAS as well. Those numbers drive the budget conversation every Monday. Which channel is above margin ROAS 1.3? Scale. Which is below 0.8? Pause or fix. Which is in between? Test a change, measure again next week.
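The Monday decision rule can be sketched the same way. This Python version assumes each row is (channel, reconciled revenue, ad spend), computes blended ROAS over paid rows only (matching the blended definition that excludes organic and email), and maps each channel's margin ROAS onto scale, pause, or test. The function name is hypothetical, and treating exactly 1.3 or exactly 0.8 as "test" is an assumption.

```python
from typing import Dict, List, Tuple

Row = Tuple[str, float, float]  # (channel, reconciled_revenue, ad_spend)


def monday_review(rows: List[Row], gross_margin: float) -> Dict[str, object]:
    paid = [r for r in rows if r[2] > 0]  # skip organic, email, direct
    total_revenue = sum(rev for _, rev, _ in paid)
    total_spend = sum(spend for _, _, spend in paid)
    blended = total_revenue / total_spend
    calls: Dict[str, str] = {}
    for channel, rev, spend in paid:
        channel_margin_roas = rev / spend * gross_margin
        if channel_margin_roas > 1.3:
            calls[channel] = "scale"
        elif channel_margin_roas < 0.8:
            calls[channel] = "pause or fix"
        else:
            calls[channel] = "test a change"
    return {"blended": blended, "margin": blended * gross_margin, "calls": calls}


rows = [
    ("Meta", 20_000.0, 12_000.0),
    ("Google Search", 18_000.0, 6_000.0),
    ("TikTok", 2_400.0, 4_000.0),
    ("email", 9_000.0, 0.0),
]
print(monday_review(rows, 0.60)["calls"])
# {'Meta': 'test a change', 'Google Search': 'scale', 'TikTok': 'pause or fix'}
```

With these made-up numbers, blended ROAS is 40,400 / 22,000, about 1.84, or roughly 1.10 margin ROAS at 60% gross margin.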

Stores under $500k a month do not need attribution software. They need discipline. The spreadsheet forces it because you cannot hide behind a proprietary blended number somebody else built. Every cell has a source you can audit.

Incrementality testing: the truth cop of attribution

Every attribution model above is a model. Incrementality testing is a measurement. That is the whole difference, and it is why the answer to "which attribution model is correct" is always "none of them, run a test." The model gives you a number. The test tells you whether the model is lying.

The simplest form that works on Shopify is a geo holdout test. Pick two or three states with similar historical performance, turn off Meta ads in those states for four weeks while keeping them on elsewhere, compare revenue in the holdout geos against control. If revenue in the holdout drops 18% relative to control, that 18% is the true incremental lift from Meta. If revenue drops 4%, Meta was getting credit for purchases that were going to happen anyway. We have run this across about 20 Shopify brands in 2025 and 2026. True incremental lift from Meta typically lands at 40 to 60% of what Meta reports. In plain English, Meta claims $100k in revenue and actually drove $50k. The other $50k would have happened regardless.
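The arithmetic behind a geo holdout readout fits in a few lines. This Python sketch uses a simple ratio estimator: it scales the holdout geos' pre-test revenue by how much the control geos grew, treats that as what the holdout would have done with ads on, and reports the shortfall. The estimator, the function name, and the dollar figures are assumptions for illustration; a real test still needs well-matched geos and enough volume to separate signal from noise.

```python
from typing import Dict


def geo_holdout_readout(
    holdout_pre: float, holdout_test: float, control_pre: float, control_test: float
) -> Dict[str, float]:
    """Compare holdout geos (ads off) to control geos (ads on) over the same weeks.

    *_pre is revenue in the weeks before the test, *_test during the test.
    """
    # What the holdout would have done had it followed the control's trend.
    expected_holdout = holdout_pre * control_test / control_pre
    incremental_revenue = expected_holdout - holdout_test
    lift = incremental_revenue / expected_holdout
    return {"expected": expected_holdout, "incremental": incremental_revenue, "lift": lift}


r = geo_holdout_readout(holdout_pre=100_000, holdout_test=86_100,
                        control_pre=400_000, control_test=420_000)
print(round(r["expected"]), round(r["incremental"]), round(r["lift"], 2))
# 105000 18900 0.18
```

Here the holdout came in 18% below what the control trend predicts, and the $18,900 shortfall is the revenue the ads actually drove in those geos over the test window.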

That does not mean Meta is worthless. It means the reported ROAS is 2x the real ROAS, and you should plan spend accordingly. A Meta campaign showing 3.5 ROAS platform-reported is probably doing 1.75 real ROAS. Convert that to the margin frame before judging it: at 60% gross margin it is 1.05 margin ROAS, barely above break-even. At 45% margin it is 0.79, and every sale loses money. Without the test you have no way to know.

Run a geo test once a quarter on your biggest channel. Write the result in a Google Doc. Use the incrementality coefficient (the ratio of truly incremental revenue to platform-reported revenue) to adjust every attribution report for the next 90 days. That is the entire system. Triple Whale and Northbeam both offer synthetic incrementality features, which are better than nothing. Neither replaces an actual holdout test, because both are still models built on the same biased click data.
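Applying the coefficient is one multiplication per report. This Python sketch uses the figures from this section ($50k incremental against $100k Meta-reported, and a 3.5 reported ROAS) plus an assumed 60% gross margin; the function names are hypothetical.

```python
from typing import Tuple


def incrementality_coefficient(incremental_revenue: float, reported_revenue: float) -> float:
    """Share of platform-reported revenue the holdout test says was truly incremental."""
    if reported_revenue <= 0:
        raise ValueError("reported_revenue must be positive")
    return incremental_revenue / reported_revenue


def adjust_reported_roas(reported_roas: float, coefficient: float,
                         gross_margin: float) -> Tuple[float, float]:
    """Return (incremental ROAS, incremental margin ROAS) for the next 90 days of reports."""
    real_roas = reported_roas * coefficient
    return real_roas, real_roas * gross_margin


coef = incrementality_coefficient(50_000, 100_000)
real, margin = adjust_reported_roas(3.5, coef, 0.60)
print(coef, real, round(margin, 2))  # 0.5 1.75 1.05
```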

Reading attribution data without fooling yourself

The biggest attribution mistake operators make is reading the right model at the wrong granularity. Picking the wrong model is a smaller problem than reading a correct model through a distorting lens. Three rules we apply on every audit:

- Never make a budget decision off less than 7 days of data. Weekly seasonality plus attribution lag (Meta's 7-day click window, Google's 30-day) means daily ROAS numbers are pure noise. Weekly is the minimum honest unit.

- Always compare against a baseline. "Meta ROAS was 3.2 this week" means nothing. "Meta ROAS was 3.2 this week vs 2.9 last week and 3.4 trailing 4-week average" means something. Without the comparison you are reacting to noise.

- Segment by new vs returning customer before you draw conclusions. Meta overclaims on returning customers because they were already coming back. Split the ROAS into new-customer ROAS and returning-customer ROAS. The new-customer number is the one that tells you if the top of the funnel is working. Shopify's customer-type segmentation in the marketing report makes this easy, and almost nobody uses it.
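The baseline comparison and the new-versus-returning split can be sketched in Python. It assumes one ROAS value per full week, oldest first, and takes the trailing 4-week average over the four weeks before the current one (the text does not say whether the current week is included). Dividing both customer types' revenue by the same total spend is also an assumption, since ad spend cannot be split by customer type; the function names are hypothetical.

```python
from statistics import mean
from typing import Dict, List


def roas_vs_baseline(weekly_roas: List[float]) -> Dict[str, float]:
    """Compare this week's ROAS with last week and the prior 4-week average."""
    if len(weekly_roas) < 5:
        raise ValueError("need the current week plus at least 4 prior full weeks")
    return {
        "this_week": weekly_roas[-1],
        "last_week": weekly_roas[-2],
        "trailing_4wk": mean(weekly_roas[-5:-1]),
    }


def split_roas(new_revenue: float, returning_revenue: float, spend: float) -> Dict[str, float]:
    """New-customer and returning-customer ROAS against the same total spend."""
    return {"new": new_revenue / spend, "returning": returning_revenue / spend}


print(roas_vs_baseline([3.6, 3.3, 3.8, 2.9, 3.2]))
print(split_roas(18_000, 20_400, 12_000))  # {'new': 1.5, 'returning': 1.7}
```

In this made-up week, the headline 3.2 ROAS is only 1.5 from new customers, and that is the number that says whether the top of the funnel is working.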

The honest reader checks all three before touching a budget. The optimistic reader sees the 3.2 ROAS, raises the daily budget by 40%, and watches CPA spike a week later because the scale broke the tracking. We see this sequence monthly. The tool is fine. The reading is the problem.

One more thing that does not show up in any dashboard. Attribution data tells you what happened. It does not tell you what to do. A campaign with 2.1 reported ROAS and 1.2 margin ROAS might still be the right campaign to keep running if it is your only source of new-customer acquisition and LTV-to-CAC on that cohort is 4.0 over 12 months. A campaign with 4.5 reported ROAS on 100% returning customers is a nice-to-have that is not actually growing the business. Attribution plus LTV context is what drives real decisions. Attribution alone still leaves the hard question on the table.

Frequently asked questions

Is last-click attribution completely dead in 2026?

Can I use Triple Whale or Northbeam as my only attribution source?

How much does incrementality testing cost?

What is the difference between data-driven and multi-touch attribution?

Should I trust Meta's attribution or Google's attribution more?

How often should I rerun an incrementality test?

The Shopify attribution model question in 2026 is really a budget decision question in disguise. Pick the model that matches the decision you are actually trying to make. Last-click for brand and email. Data-driven for Google in-platform. Blended and margin-adjusted for total marketing efficiency. Platform-reported for intra-platform optimization only, never summed across platforms. Add a quarterly incrementality test to catch the model when it drifts, which it always eventually does. Skip the premium attribution tool until you cross $500k a month in revenue, because below that the DIY spreadsheet is more honest anyway. Above $2M a month, Northbeam starts earning its keep. Between those two, Triple Whale is a reasonable bet if you know you are buying a smoother dashboard and not a truer number. That is the honest version. Nobody in the attribution vendor world will tell you this, because the whole category depends on founders thinking one tool solves the measurement problem. It does not. A model plus a test plus a reconciled spreadsheet does.
