Triple Whale vs Northbeam: attribution for Shopify

Triple Whale vs Northbeam is the attribution comparison most Shopify founders run at exactly the wrong revenue stage, usually right after a $1,000 renewal invoice lands, and almost always with the wrong frame. The honest answer in 2026 is that both tools solve the same problem with different tradeoffs, and neither one is a source of truth. Triple Whale wins on operator UX, price, and speed to first dashboard. Northbeam wins on model sophistication, brand-channel credit, and the seriousness of its underlying math. Neither one runs incrementality the way a geo holdout does, which means the number both dashboards give you is a smoothed model, not a measurement. Picking the wrong one wastes $500 to $12,000 a year and a lot of analyst patience. Best to size the decision to the actual store, not the pitch deck.

- Triple Whale fits stores between $500k and $2M monthly GMV that want one clean dashboard.

- Northbeam fits stores above $2M monthly GMV running brand-level channels like YouTube and podcasts.

- Neither tool replaces incrementality testing, because both run on biased click data.

- Nobody needs both. If your agency says "we use both for redundancy," you are paying twice for the same wrong answer.

What attribution software actually does (and does not do)

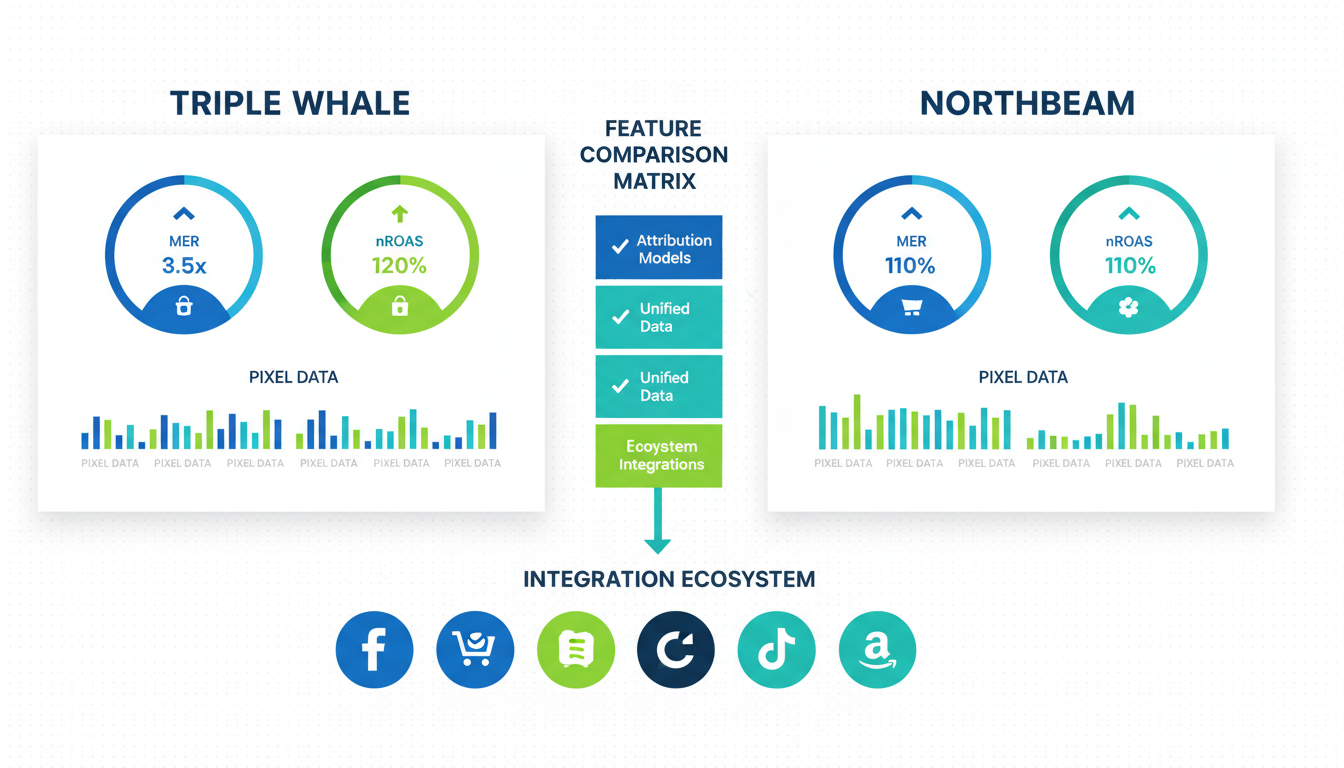

Every ecommerce attribution software vendor pitches the same thing: one dashboard, one blended number, one version of the truth. The reality is slimmer. Triple Whale and Northbeam both pull spend, email, and order data from Meta, Google, TikTok, Klaviyo, and Shopify into one view, run their own pixel for first-party click tracking, and output a blended ROAS that deduplicates platform over-claim. That is the real job. Everything else on the feature list is scaffolding.
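As a rough illustration of that job, the sketch below computes a blended ROAS from store-level revenue and compares it with what the platforms claim in total. The `ChannelSpend` record, the sample figures, and the choice to divide all booked store revenue by all ad spend are assumptions for illustration, not either vendor's actual method.

```python
from dataclasses import dataclass


@dataclass
class ChannelSpend:
    channel: str
    spend: float            # ad spend for the period, in dollars
    claimed_revenue: float  # revenue the platform attributes to itself


def blended_roas(store_revenue: float, rows: list[ChannelSpend]) -> float:
    """Store revenue (each order counted once) divided by total ad spend."""
    total_spend = sum(r.spend for r in rows)
    if total_spend <= 0:
        raise ValueError("total ad spend must be positive")
    return store_revenue / total_spend


def over_claim_ratio(store_revenue: float, rows: list[ChannelSpend]) -> float:
    """Sum of platform-claimed revenue relative to what the store booked."""
    if store_revenue <= 0:
        raise ValueError("store revenue must be positive")
    return sum(r.claimed_revenue for r in rows) / store_revenue


# Hypothetical one-day figures, not data from any real store.
rows = [
    ChannelSpend("meta", 4000.0, 14000.0),
    ChannelSpend("google", 2000.0, 9000.0),
    ChannelSpend("tiktok", 1000.0, 2000.0),
]
store_revenue = 20000.0

print(f"blended ROAS: {blended_roas(store_revenue, rows):.2f}")
print(f"platforms claim {over_claim_ratio(store_revenue, rows):.2f}x of booked revenue")
```

An over-claim ratio above 1.0 means the platforms collectively take credit for more revenue than the store booked, which is the double counting a blended number is meant to remove.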

What they do not do, and this is the part that matters: measure incrementality. Both tools run a model on click data. The click data is already biased, because Meta and Google both fire pixels on the same conversion and both claim credit. The model smooths the over-claim, which is useful. The model does not tell you which conversions would have happened without ads, which is the question you need answered when you are deciding where to put next month's $80k. That question is answered by a geo holdout test, which takes four weeks and costs 5 to 15% of ad spend in the period. Neither tool gives you that number without the test.

The short version: treat both as a better blended dashboard, not as truth. The Shopify attribution app comparison gets cleaner once you drop the expectation that either vendor sells measurement. They sell modeling. Modeling plus a quarterly incrementality test beats modeling alone.

Pricing reality at $100k, $500k, $2M/mo GMV

Pricing is where the Triple Whale vs Northbeam comparison gets messy, because both vendors price on a sliding scale tied to ad spend and store revenue, and neither posts a clean rate card for enterprise. The numbers below are from live sales conversations in Q1 2026, not marketing pages, because the marketing pages are deliberately vague.

Monthly cost by store GMV, 2026:

| Monthly GMV | Triple Whale (per month) | Northbeam (per month) | Notes |
|---|---|---|---|
| $100k | $129 | n/a | Northbeam's floor starts above this scale |
| $250k | $200 | n/a | Northbeam typically declines under $500k |
| $500k | $400 | $1,000 | Northbeam's entry tier |
| $1M | $600 | $1,500 | Both scale on ad spend, not just GMV |
| $2M | $900 | $2,500 | The honest crossover zone |
| $5M | $1,500 | $4,500 | Northbeam pulls ahead on modeling |
| $10M+ | Custom | Custom | Both quote enterprise, both negotiable |

Two things jump out. First, Triple Whale is meaningfully cheaper across every band, usually 2x to 3x cheaper than Northbeam for equivalent store size. Second, Northbeam does not sell below $500k GMV in most cases. The real price comparison only exists in the overlap band, which is $500k to $10M monthly GMV.
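For anyone who wants to check the ratio themselves, here is a small sketch that works out the price multiple and the annual dollar gap for the bands where both vendors quote. The figures are copied from the table above; the `price_gap` helper is a hypothetical name used only for this example.

```python
# Monthly list prices in USD from the table above: band -> (Triple Whale, Northbeam)
pricing = {
    "$500k": (400, 1000),
    "$1M": (600, 1500),
    "$2M": (900, 2500),
    "$5M": (1500, 4500),
}


def price_gap(triple_whale: int, northbeam: int) -> tuple[float, int]:
    """Return (Northbeam price as a multiple of Triple Whale, annual dollar gap)."""
    return northbeam / triple_whale, (northbeam - triple_whale) * 12


gaps = {band: price_gap(tw, nb) for band, (tw, nb) in pricing.items()}

for band, (multiple, annual_gap) in gaps.items():
    print(f"{band}: Northbeam is {multiple:.2f}x Triple Whale, ${annual_gap:,} more per year")
```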

The trap to watch for: both vendors advertise "starts at" pricing based on the cheapest configuration, which usually excludes the features you actually need. Triple Whale's $129 tier does not include creative analytics, customer journey reporting, or survey-based post-purchase attribution. Northbeam's $1,000 tier does not include marketing mix modeling, which is the whole reason you would buy Northbeam in the first place. Always price the tier that includes the features you were sold on. We see stores land bills 50% above their expectation because they trusted the landing page number instead of the sales quote.

Data model: how each one calculates blended ROAS differently

The data model is the biggest technical difference between the two tools, and most comparisons skip it because it is genuinely complicated. Both tools produce a blended ROAS number. The numbers almost never match on the same store, and the difference sometimes runs 25 to 40%. Understanding why is the difference between trusting the dashboard and being misled by it.

Triple Whale runs a heuristic click-based model, layered with post-purchase survey data when the store enables the Sonar feature. Every tracked session gets a click path, the model assigns credit using a weighted algorithm that favors the most recent paid touch, and survey responses get stitched in to adjust for self-reported attribution. It is a click-first model with a survey patch. Triple Whale's documentation covers setup but stays deliberately vague on the actual math, which is fine if you trust the vendor and a problem when you are trying to debug why two dashboards disagree.
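Since the vendor does not publish the math, the following is only a generic sketch of what a recency-weighted click model can look like, not Triple Whale's algorithm. The `recency_weighted_credit` function and the `decay` parameter are assumptions made up for this illustration, and the survey adjustment is left out.

```python
def recency_weighted_credit(paid_touches: list[str], decay: float = 0.5) -> dict[str, float]:
    """Split one order's credit across paid touches, favoring the most recent.

    paid_touches is ordered oldest to newest. The newest touch gets weight 1,
    the one before it gets `decay`, then decay**2, and so on. Weights are
    normalized so the credit for the order sums to 1.
    """
    if not paid_touches:
        return {}
    n = len(paid_touches)
    weights = [decay ** (n - 1 - i) for i in range(n)]
    total = sum(weights)
    credit: dict[str, float] = {}
    for channel, weight in zip(paid_touches, weights):
        # A channel that appears more than once accumulates its shares.
        credit[channel] = credit.get(channel, 0.0) + weight / total
    return credit


# Hypothetical click path: YouTube first, then Google, then Meta right before purchase.
credit = recency_weighted_credit(["youtube", "google", "meta"])
for channel, share in credit.items():
    print(f"{channel}: {share:.1%}")
```

With any `decay` below 1, the earliest touch gets the smallest share, which is the mechanism behind a click-first model under-crediting top-of-funnel channels.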

Northbeam runs marketing mix modeling layered on top of multi-touch click attribution. The MMM component looks at historical spend and revenue patterns across channels over 12+ months and estimates baseline contribution per channel, independent of click data. Northbeam's approach stitches click paths the same way Triple Whale does, but weights brand-level channels (YouTube, podcasts, OTT, influencer) more generously because MMM picks up their lift even when the click does not close the loop. For a brand spending $50k a month on YouTube, that difference is the entire reason Northbeam exists.

The practical implication: Triple Whale tends to under-credit top-of-funnel channels that do not drive the last click. Northbeam tends to over-credit them, because MMM is generous and click data cannot validate the lift without an incrementality test. Which one you accept depends on channel mix. If 90% of your spend is Meta and Google, Triple Whale's number is probably closer to reality. If 30%+ is YouTube, podcasts, OTT, or influencer, Northbeam's number is probably closer. We see a 30% gap between the two tools on the same store weekly, almost always explained by this one structural difference.

Dashboard and operator experience

Daily operator experience is where Triple Whale's lead is widest, and it is the reason the tool won the low-to-mid market despite a weaker model. The founder logging in at 9am wants three numbers (yesterday's spend, yesterday's revenue, blended ROAS) on one screen, and Triple Whale puts them there in a clean card layout that loads in 2 seconds. Northbeam's dashboard is denser and takes longer to scan. For the operator doing 8 other things, dashboard scannability is a real feature.

Triple Whale's strengths in operator UX:

- Morning pulse view (today + yesterday + trailing 7) on the login screen.

- Creative analytics overlay for Meta ads reporting without leaving the tool.

- Slack integration sends daily and weekly summaries directly to the channel.

- Sonar post-purchase survey ships with sensible defaults, no configuration required.

Northbeam's strengths are analyst-facing rather than operator-facing:

- Channel breakdown by impression-based vs click-based vs MMM contribution on one view.

- Statistical confidence intervals on channel performance, which Triple Whale does not show.

- Historical model version control, so you see how attribution shifted after a model update.

- Deeper filtering on cohort, campaign, ad set, and creative simultaneously.

The operator experience trade: Triple Whale wins for founders and operators, Northbeam wins for analysts and data teams. If the person logging in is also managing ads, responding to customer service, and scheduling social, Triple Whale gets used. If the person is a full-time growth analyst with three hours a day for reports, Northbeam gets used. Buying the wrong tool for the user is how $1,500 a month gets spent on a dashboard nobody opens.

Incrementality testing: where each one falls short

Both tools now ship incrementality features. Triple Whale calls it Conversion Lift, Northbeam calls it Incrementality. Both are useful as directional signals. Neither replaces a real geo holdout test, and understanding why is important before you let the feature talk you out of running the test properly.

The synthetic incrementality feature in both tools compares cohorts of users exposed vs not exposed to a channel, using the click data already in the system. It is modeled incrementality, not measured. The problem is that click data already has the same bias both tools are supposedly correcting for. Meta fires a pixel on people who saw an ad but did not click, Triple Whale and Northbeam count that as exposure, and the exposed vs unexposed comparison is built on top of the same click bias the model is trying to fix. Turtles all the way down.

A real incrementality test is geo-based. You pick two or three states with matched historical performance, turn off Meta ads in the holdout states for four weeks while keeping them on in control, and compare revenue. The revenue gap is the true incremental lift, because the holdout states were isolated from the ad exposure. Neither Triple Whale nor Northbeam runs this test natively. Both tools give you a dashboard to read the results once the test is done, which is useful, but the test itself requires a campaign structure change in Meta or Google that happens outside the tool. Meta's Conversion Lift documentation covers the platform-run version, which is a different flavor of the same idea.
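The arithmetic behind reading a geo holdout can be sketched as follows. This is a simplified version that scales the holdout states' pre-test revenue by the trend seen in the control states, then treats the shortfall as incremental revenue from the paused ads. The function name and all figures are hypothetical, and a real test would also check statistical noise before trusting the gap.

```python
def geo_holdout_lift(control_pre: float, control_test: float,
                     holdout_pre: float, holdout_test: float) -> tuple[float, float]:
    """Estimate incremental revenue from ads that were paused in holdout states.

    *_pre is revenue in a matched period before the test, *_test is revenue
    during the test window. Control states kept ads on, holdout states had
    ads turned off.
    Returns (incremental revenue, incremental share of expected revenue).
    """
    if control_pre <= 0 or holdout_pre <= 0:
        raise ValueError("pre-period revenue must be positive")
    trend = control_test / control_pre           # how revenue moved with ads on
    expected_holdout = holdout_pre * trend       # holdout revenue had ads stayed on
    incremental = expected_holdout - holdout_test
    return incremental, incremental / expected_holdout


# Hypothetical four-week figures, in dollars.
incremental, share = geo_holdout_lift(
    control_pre=200_000, control_test=220_000,
    holdout_pre=100_000, holdout_test=88_000,
)
print(f"incremental revenue from ads: ${incremental:,.0f} ({share:.0%} of expected)")
```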

The gap that matters: in 20+ geo holdout tests we have run across Shopify brands in 2025 and 2026, true incremental lift from Meta landed at 40 to 60% of platform-reported revenue. Triple Whale's synthetic incrementality typically shows lift at 70 to 85% of reported revenue. Northbeam's lands at 65 to 80%. Both overestimate real lift compared to a proper geo test, because both run on the same biased data. Run the geo test once a quarter regardless of which tool you pick. The synthetic feature is a sanity check, not a replacement.
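To see what those percentages do to a budget decision, the sketch below converts each range into an incremental ROAS for one hypothetical month of Meta spend. The share ranges come from the paragraph above. The spend and reported revenue figures are made up for the example.

```python
# Share of platform-reported revenue that each method treats as truly incremental.
LIFT_SHARE = {
    "geo holdout": (0.40, 0.60),
    "Triple Whale synthetic": (0.70, 0.85),
    "Northbeam synthetic": (0.65, 0.80),
}


def incremental_roas_range(reported_revenue: float, spend: float,
                           share_range: tuple[float, float]) -> tuple[float, float]:
    """Low and high incremental ROAS implied by a lift-share range."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    low, high = share_range
    return reported_revenue * low / spend, reported_revenue * high / spend


# Hypothetical month: Meta reports $200k revenue on $80k spend (reported ROAS 2.5).
reported_revenue, spend = 200_000.0, 80_000.0
ranges = {
    method: incremental_roas_range(reported_revenue, spend, share)
    for method, share in LIFT_SHARE.items()
}
for method, (low, high) in ranges.items():
    print(f"{method}: incremental ROAS {low:.2f} to {high:.2f}")
```

In this example the geo-test range straddles 1.0 to 1.5 while both synthetic ranges sit above 1.6, which is the kind of difference that flips a scale-or-cut decision.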

Which DTC categories fit each tool

Category fit matters more than operators expect, because model strengths show up differently depending on the channel mix a category typically runs. A few patterns we see consistently across audits:

- Beauty and skincare ($500k to $5M GMV): Triple Whale usually wins. Meta-heavy with some TikTok and Google Shopping, which is where Triple Whale's click-based model performs cleanest. Sonar picks up word-of-mouth and influencer effects.

- Apparel and accessories ($1M to $10M GMV): Mixed. If the brand runs heavy YouTube and influencer spend, Northbeam's MMM layer pulls its weight. If it is Meta and Google only, Triple Whale is cheaper for equivalent insight.

- Supplements and wellness ($500k to $20M GMV): Northbeam above $2M GMV. Longer consideration cycles and more cross-channel touches before purchase are where MMM shows its value. Below $2M, usually Meta-only, so Triple Whale.

- Food and beverage ($1M to $10M GMV): Northbeam wins for brands spending on OTT, podcasts, and sponsorships. Triple Whale wins for DTC food brands running pure Meta and Google paid.

- Home goods and furniture ($2M to $50M GMV): Northbeam. Long consideration cycles, high AOV, and brand-building spend favor MMM over click-based.

- Fashion and luxury ($5M+ GMV): Northbeam, usually. Brand premium and influencer spend are material enough that MMM credit allocation changes budget decisions.

- Subscription-first brands ($1M to $20M GMV): Triple Whale. The LTV module is stronger out of the box, and most subscription brands are Meta-first anyway.

The pattern across categories: click-heavy, paid-social-dominant brands map to Triple Whale. Brand-channel-heavy, long-consideration brands map to Northbeam. The middle is contested, and the right call depends more on channel mix than category label.
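One way to write that pattern down is as a first-pass rule of thumb, using the $500k and $2M GMV thresholds and the 30% brand-channel share mentioned in this article. The `suggest_tool` function is a hypothetical helper, and its treatment of the contested middle band (defaulting to Triple Whale) is a simplifying assumption rather than a firm recommendation.

```python
def suggest_tool(monthly_gmv: float, brand_channel_share: float) -> str:
    """First-pass suggestion from store size and channel mix.

    brand_channel_share is the fraction of ad spend going to YouTube,
    podcasts, OTT, or influencer (0.0 to 1.0).
    """
    if not 0.0 <= brand_channel_share <= 1.0:
        raise ValueError("brand_channel_share must be between 0 and 1")
    if monthly_gmv < 500_000:
        return "neither"        # spreadsheet plus Shopify's native report
    if monthly_gmv >= 2_000_000 and brand_channel_share >= 0.30:
        return "Northbeam"      # enough brand spend for MMM to change decisions
    return "Triple Whale"       # click-heavy mix, or the contested middle band


print(suggest_tool(300_000, 0.50))
print(suggest_tool(1_000_000, 0.10))
print(suggest_tool(3_000_000, 0.35))
```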

Migration and export: can you actually leave?

Migration between attribution tools is a topic neither vendor advertises honestly. Historical attribution data does not export between tools because each model is proprietary. Your 18 months of Triple Whale history cannot rebuild inside Northbeam, because Northbeam's model would produce different numbers on the same raw data. You lose the trend line the day you switch.

What exports: raw click data, ad spend, order-level revenue, channel attribution at the order level under the old model. What does not: the blended ROAS time series, the modeled incrementality, channel baselines, cohort LTV projections, anything the proprietary model produced. You can rebuild the raw history but not the modeled history.

Practical migration timeline, 4 weeks:

- Week 1: export raw data, audit flow-attribution mappings, inventory custom reports and dashboards to rebuild.

- Week 2: stand up the new tool, connect Meta, Google, TikTok, Klaviyo, Shopify, validate that spend pulls match platform UIs to the dollar.

- Week 3: run both tools in parallel for 14 days, compare blended ROAS daily, document the gap and which channel each model is explaining differently.

- Week 4: migrate the reporting stack (Slack summaries, internal dashboards, Looker integrations) to the new source, confirm stakeholders can still get the numbers they need.
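The Week 2 and Week 3 checks in the timeline above are easy to script once both tools can export spend and blended ROAS. The sketch below is one way to do it. The function names, the $1 tolerance standing in for "to the dollar," and the sample figures are all assumptions for illustration.

```python
def spend_mismatches(tool: dict[str, float], platform: dict[str, float],
                     tolerance: float = 1.0) -> dict[str, float]:
    """Channels where the tool's spend differs from the platform UI by $1 or more."""
    diffs = {}
    for channel, ui_spend in platform.items():
        diff = tool.get(channel, 0.0) - ui_spend
        if abs(diff) >= tolerance:
            diffs[channel] = diff
    return diffs


def roas_gap(old_tool_roas: float, new_tool_roas: float) -> float:
    """Relative gap of the new tool's blended ROAS versus the old tool's."""
    if old_tool_roas <= 0:
        raise ValueError("old tool ROAS must be positive")
    return (new_tool_roas - old_tool_roas) / old_tool_roas


# Hypothetical monthly spend as shown in each platform UI vs pulled by the new tool.
platform_ui = {"meta": 41_250.00, "google": 18_900.00, "tiktok": 6_400.00}
new_tool = {"meta": 41_250.00, "google": 18_650.00, "tiktok": 6_400.40}

mismatches = spend_mismatches(new_tool, platform_ui)
gap = roas_gap(old_tool_roas=2.0, new_tool_roas=2.6)

print("spend mismatches:", mismatches)
print(f"blended ROAS gap during parallel run: {gap:+.0%}")
```

Any channel that shows up in `mismatches` is worth fixing before the parallel run starts, since a spend discrepancy would otherwise be misread as a modeling difference.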

Agency-managed migration runs $3k to $8k depending on complexity. DIY takes 40 to 80 hours of operator time, which at any reasonable hourly rate is usually more expensive than hiring help. The deeper cost is the trend line loss. If the board deck quotes YoY blended ROAS, migration resets the time series. Best to migrate at a natural year boundary (Q1 or fiscal year start), not mid-quarter when everybody is already watching the number.

The honest answer on whether migration is worth it: below $2M monthly GMV, cost-driven migration rarely pays back inside a 12-month window, because the migration cost and trend reset usually exceed the monthly savings. Capability-driven migration (you actually need MMM, you actually outgrew Triple Whale) pays back faster because the capability drives better budget decisions, not just lower software cost. Pick once, build right, revisit only when store size or channel mix changes materially.
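A quick payback check for a cost-driven switch might look like the sketch below. The inputs are illustrative: the fees are list prices for the $500k band from the pricing table, and the migration cost is the top of the agency range quoted earlier. The cost of losing the trend line is not captured in the number.

```python
def payback_months(migration_cost: float, old_monthly_fee: float,
                   new_monthly_fee: float) -> float:
    """Months of software savings needed to recover the one-off migration cost."""
    monthly_savings = old_monthly_fee - new_monthly_fee
    if monthly_savings <= 0:
        return float("inf")  # the switch costs more each month, so it never pays back
    return migration_cost / monthly_savings


# Hypothetical: moving from Northbeam ($1,000/mo) to Triple Whale ($400/mo)
# at roughly $500k monthly GMV, with an $8k agency-managed migration.
months = payback_months(migration_cost=8_000, old_monthly_fee=1_000, new_monthly_fee=400)
print(f"payback: {months:.1f} months")
```

A result above 12 means the savings alone do not cover the switch inside a year, before counting the reset of the blended ROAS time series.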

Frequently asked questions

Is Triple Whale or Northbeam better for a store doing $1M a month?

Can I use Triple Whale or Northbeam as my only source of truth?

How much does switching between Triple Whale and Northbeam cost?

Does Northbeam's marketing mix modeling actually work?

What is the difference between Triple Whale's Sonar and a real survey tool?

Should I run both Triple Whale and Northbeam for redundancy?

Triple Whale vs Northbeam is really a store-size and channel-mix question in disguise. Below $500k monthly GMV you do not need either tool: a DIY reconciled spreadsheet and Shopify's native marketing report will do the job more honestly. Between $500k and $2M, Triple Whale earns its keep as a cleaner dashboard and the price gap vs Northbeam is hard to justify unless your channel mix already tilts brand-heavy. Above $2M, the math starts favoring Northbeam if you are running YouTube, podcasts, OTT, or influencer at material scale, and the MMM layer begins picking up lift the click model misses. Neither tool is a source of truth, and neither one replaces a quarterly geo holdout test. Best to pick the one that matches your operator reality, run the incrementality test to validate the number, and revisit only when the store size or channel mix changes enough to matter.
